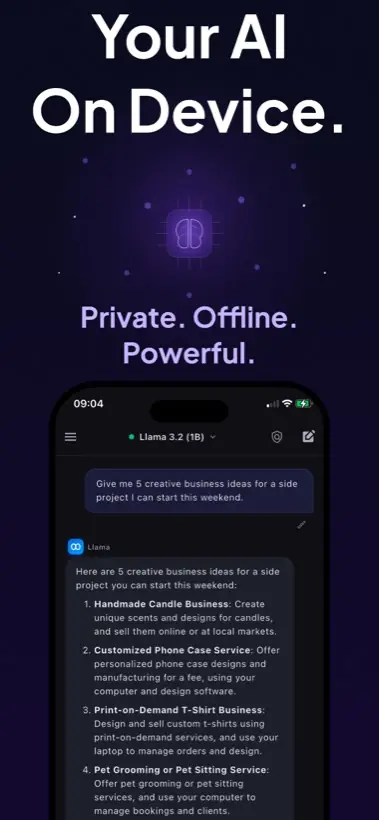

Quick answer: Start with a small, mobile-friendly model. Local AI Chat lists built-in model families such as Gemma, Qwen, and SmolLM, and it supports importing compatible GGUF models from families such as Llama, Mistral, and Phi for users who want more control.

The mobile model rule

On desktop, people often chase bigger models. On iPhone, the better first question is: will this model answer quickly enough that I will keep using it? A smaller model that runs smoothly is usually better than a larger one that responds slowly.

How to choose

Model size matters more than the brand name

A model family name tells you very little without size, quantization, context length, and device RAM. A compact quantized file may feel excellent on a phone. A larger file may use more storage, respond slowly, and heat the device.
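As a rough illustration of why size and quantization matter more than the family name, the sketch below estimates weight size as parameters times bits per weight divided by 8. The function names, the 50% usable-RAM fraction, and the example numbers are assumptions for illustration only. Real files also carry metadata, and running a model needs extra memory for context.

```python
def estimate_model_bytes(params_billion: float, bits_per_weight: float) -> int:
    """Approximate weight size: parameters x bits per weight / 8."""
    return int(params_billion * 1e9 * bits_per_weight / 8)


def fits_in_ram(model_bytes: int, device_ram_gb: float, usable_fraction: float = 0.5) -> bool:
    """Hypothetical rule of thumb: assume an app can use only part of device RAM."""
    budget = device_ram_gb * 1024**3 * usable_fraction
    return model_bytes <= budget


# Illustrative numbers only, not measurements of any specific model.
small = estimate_model_bytes(1.0, 4.5)  # 1B parameters at about 4.5 bits per weight
large = estimate_model_bytes(8.0, 4.5)  # 8B parameters at the same quantization

print(f"small: {small / 1e9:.2f} GB, fits in 8 GB RAM: {fits_in_ram(small, 8)}")
print(f"large: {large / 1e9:.2f} GB, fits in 8 GB RAM: {fits_in_ram(large, 8)}")
```

Under these assumptions the same quantization gives very different results for different parameter counts, which is why a family name alone says little about how a model will behave on a phone.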

- Start small. Confirm that local AI is useful for your daily tasks.

- Increase only when needed. Move to a larger model only if quality falls short and speed remains acceptable.

- Keep a fast fallback. A quick model is valuable for travel, notes, and everyday questions.

- Test your own prompts. Benchmarks do not know your notes, screenshots, or writing style.
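One way to act on "test your own prompts" is to time the same short prompt list against each model you try. The sketch below is a minimal timing harness. `fake_generate` is a hypothetical stand-in for whatever produces a reply, since the app itself does not expose a Python API, and the prompts are placeholders for your own.

```python
import time
from typing import Callable, Dict, List


def time_prompts(generate: Callable[[str], str], prompts: List[str]) -> Dict[str, float]:
    """Return the seconds taken for each prompt by the given generate function."""
    timings = {}
    for prompt in prompts:
        start = time.perf_counter()
        generate(prompt)
        timings[prompt] = time.perf_counter() - start
    return timings


def fake_generate(prompt: str) -> str:
    """Hypothetical stand-in for a real model call, for illustration only."""
    time.sleep(0.01)
    return prompt.upper()


# Replace these with prompts from your real workflow.
my_prompts = ["Summarize my meeting notes", "Rewrite this email politely"]
results = time_prompts(fake_generate, my_prompts)
for prompt, seconds in results.items():
    print(f"{seconds:.3f}s  {prompt}")
```

On a phone, the same idea works with a stopwatch: run a fixed set of prompts on each model and note how long each answer takes.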

What about GGUF imports?

GGUF is a common local model format documented by Hugging Face and used widely in the local AI ecosystem. Local AI Chat supports importing compatible GGUF models by URL, which gives advanced users a route to try different model families on iPhone and iPad.
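Before pointing any app at a file, it can help to confirm that the file really is GGUF. A GGUF file begins with the four magic bytes `GGUF` followed by a little-endian 32-bit version number. The sketch below reads that header on a computer. `read_gguf_version` is a hypothetical helper, not part of Local AI Chat, and a valid header does not guarantee that a given app supports the model.

```python
import struct
import tempfile
from pathlib import Path


def read_gguf_version(path):
    """Return the GGUF version number, or None if the magic bytes do not match."""
    with open(path, "rb") as f:
        header = f.read(8)
    if len(header) < 8 or header[:4] != b"GGUF":
        return None
    (version,) = struct.unpack("<I", header[4:8])
    return version


# Self-contained demo using tiny fake files rather than real models.
with tempfile.TemporaryDirectory() as tmp:
    good = Path(tmp) / "good.gguf"
    good.write_bytes(b"GGUF" + struct.pack("<I", 3))
    bad = Path(tmp) / "bad.gguf"
    bad.write_bytes(b"not a model")
    good_version = read_gguf_version(good)
    bad_version = read_gguf_version(bad)

print(good_version, bad_version)
```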

Best model for most iPhone users

The best starting point is a built-in mobile-friendly model from the app, not a random huge file. Once you understand your speed and quality needs, experiment with compatible GGUF imports.

Recommendation: Use the fastest model that gives acceptable answers for your real workflow. On mobile, that usually beats chasing the biggest model name.
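The recommendation can be written as a simple selection rule: filter out models whose answers are not good enough, then take the fastest of what remains. The sketch below assumes you have recorded your own speed and quality ratings. The `Candidate` type, the model names, and all numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    name: str
    tokens_per_second: float  # measured on your own device
    quality: float            # your own 0 to 1 rating on your own prompts


def pick_model(candidates: List[Candidate], min_quality: float) -> Optional[Candidate]:
    """Return the fastest candidate that meets the quality bar, or None."""
    acceptable = [c for c in candidates if c.quality >= min_quality]
    if not acceptable:
        return None
    return max(acceptable, key=lambda c: c.tokens_per_second)


# Made-up ratings for illustration, not benchmarks of real models.
candidates = [
    Candidate("small-model", 30.0, 0.70),
    Candidate("medium-model", 15.0, 0.85),
    Candidate("large-model", 4.0, 0.90),
]
choice = pick_model(candidates, min_quality=0.8)
print(choice.name if choice else "no acceptable model")
```

With these example ratings the rule picks the medium model: the small one misses the quality bar, and the large one clears it but is slower.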

Sources and useful references

For format context, see Hugging Face's GGUF documentation, Google's Gemma 4 announcement, and the llama.cpp project.